World War II changed nearly every aspect of life, from economics to justice to the nature of warfare itself. Of its enduring legacies, the scientific and technological ones had a profound and permanent effect on life after 1945. Technologies developed to win the war found new uses as commercial products that became mainstays of the American home in the decades after the war's end. Wartime medical advances also became available to the civilian population, leading to a healthier and longer-lived society. At the same time, advances in the technology of warfare fed the development of increasingly powerful weapons that perpetuated tensions between global powers and fundamentally changed the way people lived. The scientific and technological legacies of World War II became a double-edged sword: they helped usher in a modern way of living for postwar Americans while also fueling the conflicts of the Cold War.

When looking at wartime technology that gained commercial value after World War II, it is impossible to ignore the small, palm-sized device known as the cavity magnetron. This device not only proved essential in helping to win World War II but also forever changed the way Americans prepared and consumed food. The name of the device, the cavity magnetron, may not be as recognizable as what it generates: microwaves. During World War II, the cavity magnetron's ability to produce shorter, or micro, wavelengths improved upon prewar radar technology and resulted in increased accuracy over greater distances. Radar played so significant a part in World War II that some historians have claimed it did more to help the Allies win the war than any other piece of technology, including the atomic bomb. After the war came to an end, cavity magnetrons moved beyond warplanes and aircraft carriers and became a common feature of American homes.

Percy Spencer, an American engineer and expert in radar tube design who helped develop radar for combat, looked for ways to apply that technology commercially after the war. According to the commonly told story, Spencer took note when a candy bar in his pocket melted as he stood in front of an active radar set. He began to experiment with different kinds of food, such as popcorn, opening the door to the commercial production of microwave ovens. Built on this wartime technology, microwave ovens became increasingly available during the 1970s and 1980s, changing the way Americans prepared food in ways that persist to this day. The ease of heating food with microwaves has made the microwave oven an expected feature of the twenty-first-century American home.

Radar did more than change the way Americans warm their food; it also became an essential tool of meteorology. The application of radar to the study of weather began shortly after the end of World War II. Using radar, meteorologists advanced their knowledge of weather patterns and improved their ability to forecast the weather. By the 1950s, radar had become a key tool for tracking rainfall and storm systems, advancing the way Americans followed and planned for daily changes in the weather.

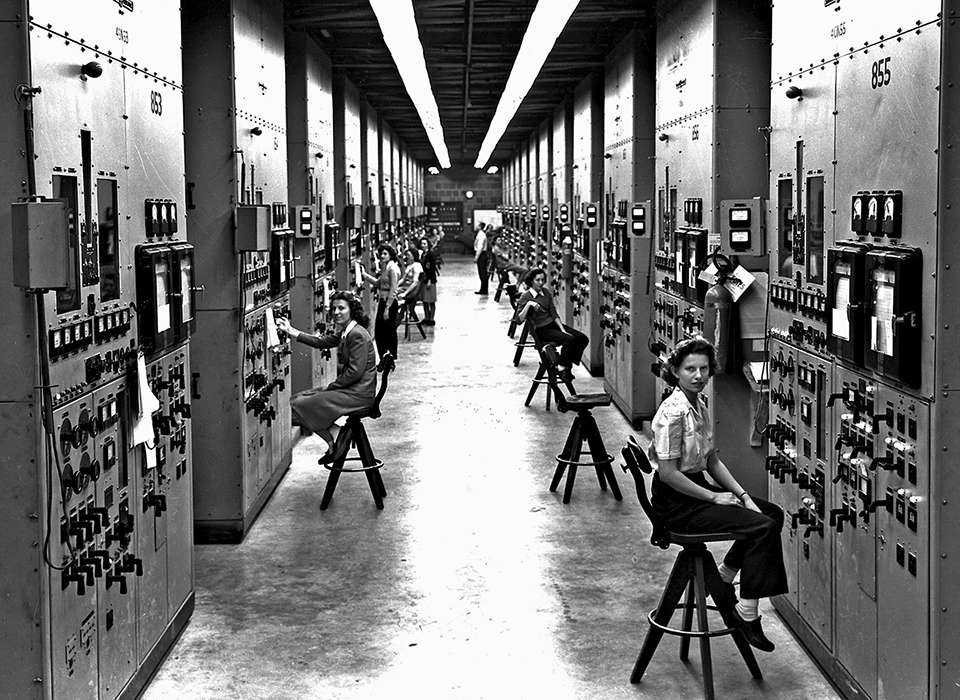

Like radar, computers had been in development well before the start of World War II. However, the war demanded rapid progress in such technology, resulting in new computers of unprecedented power. One such example was the Electronic Numerical Integrator and Computer (ENIAC), one of the first general-purpose computers. Capable of performing thousands of calculations per second, ENIAC was originally designed for military purposes, but it was not completed until 1945. Building on wartime developments in computer technology, the US government unveiled ENIAC to the public early in 1946, presenting the computer as a tool that would revolutionize the field of mathematics. Taking up 1,500 square feet, with 40 cabinets that stood nine feet tall, ENIAC came with a $400,000 price tag. Its public debut set ENIAC apart from other computers and marked a significant moment in the history of computing. By the 1970s, the patent for ENIAC's computing technology had entered the public domain, lifting restrictions on adapting its designs. Continued development over the following decades made computers progressively smaller, more powerful, and more affordable.

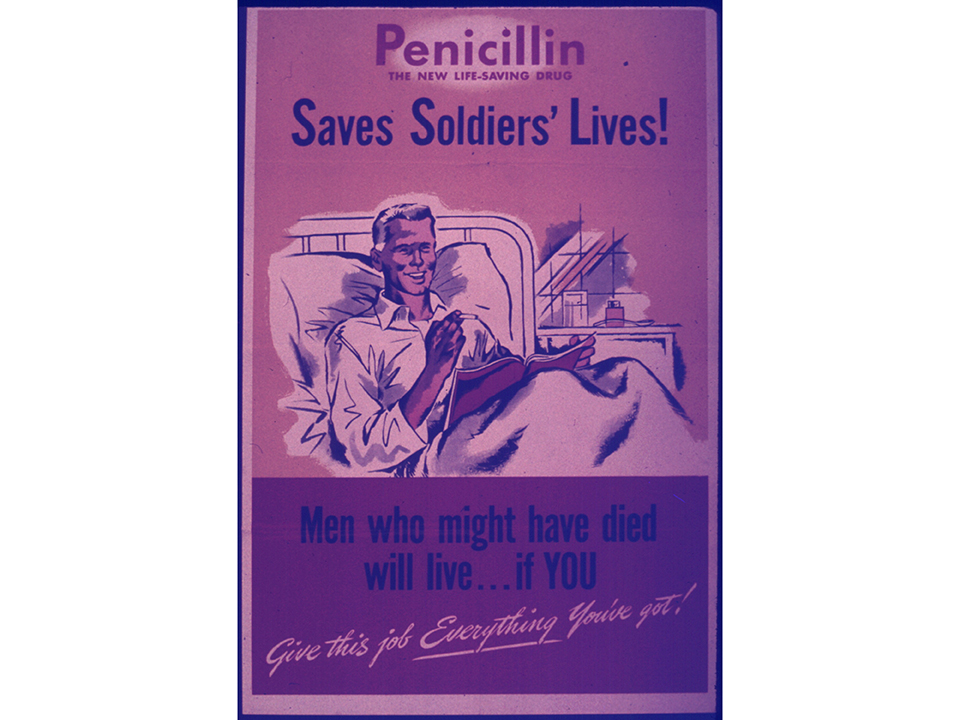

Along with advances in microwave and computer technology, World War II brought momentous changes to the fields of surgery and medicine. The devastating scale of both world wars demanded the development and use of new medical techniques, leading to improvements in blood transfusions, skin grafts, and other trauma treatments. The need to treat millions of soldiers also necessitated the large-scale production of antibacterial drugs, bringing about one of the most important medical advances of the twentieth century. Although the scientist Alexander Fleming discovered the antibacterial properties of the *Penicillium notatum* mold in 1928, commercial production of penicillin did not begin until after the start of World War II. As American and British scientists worked together to meet the needs of the war, the large-scale production of penicillin became a necessity. Men and women experimented with deep-tank fermentation, discovering the process needed to mass-manufacture penicillin. In advance of the Normandy invasion in 1944, scientists prepared 2.3 million doses of penicillin, bringing this “miracle drug” to public attention. As the war continued, advertisements heralding penicillin’s benefits established the antibiotic as a wonder drug responsible for saving millions of lives. From World War II to today, penicillin has remained a critical treatment for bacterial infections.

Penicillin Saves Soldiers Lives poster. Image courtesy of the National Archives and Records Administration, 515170.

Of all the scientific and technological advances made during World War II, few receive as much attention as the atomic bomb. Developed in the midst of a race between the Axis and Allied powers, the atomic bombs dropped on Hiroshima and Nagasaki serve as notable markers of the end of fighting in the Pacific. While debates over the decision to use atomic weapons on civilian populations persist, there is little dispute over the extensive ways the atomic age shaped the twentieth century and the standing of the United States on the global stage. After the war, competition for dominance propelled both the United States and the Soviet Union to build and stockpile as many nuclear weapons as possible. That arms race ushered in a new era of science and technology that forever changed the nature of diplomacy, the size and power of military forces, and the development of technology that ultimately put American astronauts on the surface of the moon.

The nuclear arms race that followed World War II sparked fears that one power would gain superiority not only on Earth but in space itself. During the mid-twentieth century, the resulting Space Race prompted the creation of a new federally run program in aeronautics. After the Soviet Union successfully launched the satellite Sputnik 1 in 1957, the United States responded by launching its own satellite, Explorer 1, aboard a Juno I rocket about four months later. In 1958, the US Congress passed the National Aeronautics and Space Act, which created the National Aeronautics and Space Administration (NASA) to oversee the effort to send humans into space. The Space Race between the United States and the USSR ultimately peaked with the landing of the Apollo 11 crew on the surface of the moon on July 20, 1969. The Cold War changed almost every aspect of life, but the nuclear arms race and the Space Race remain especially significant legacies of the science behind World War II.

From microwaves to space exploration, the scientific and technological advances of World War II forever changed the way people thought about and interacted with technology in their daily lives. The growth and sophistication of military weapons throughout the war created new uses for such technology, as well as new conflicts surrounding it. World War II spurred new commercial products, advances in medicine, and entirely new fields of scientific exploration. Almost every aspect of life in the United States today, from using home computers to watching the daily weather report to visiting the doctor, is influenced by this enduring legacy of World War II.


From the Collection to the Classroom: Teaching History with The National WWII Museum.

Kristen D. Burton, PhD

Kristen D. Burton, PhD, is a former Teacher Programs and Curriculum Specialist at The National WWII Museum.
